The Misunderstood Reality of AI Governance

Most organizations believe AI governance is about writing policies.

It isn’t.

Policies are documentation. Governance is control.

As artificial intelligence becomes deeply embedded into operational workflows — influencing hiring decisions, lending approvals, content moderation, automation, forecasting, and strategic decision‑making — organizations are no longer governing a tool.

They are governing scalable decision power.

This distinction is critical. Traditional governance frameworks were designed for static systems. AI systems, however, are dynamic, adaptive, and capable of influencing decisions at scale. As a result, governance must evolve from documentation-based oversight to operational control.

Most companies are not prepared for this shift.

This guide is not theoretical. Instead, it focuses on implementing governance where AI already has operational influence. The goal is to establish governance as a structural control mechanism — not a compliance exercise.

Stop Governing Models. Start Governing Decision Rights

One of the most common mistakes organizations make is focusing governance efforts on auditing models.

Bias tests. Fairness reports. Explainability dashboards.

While these tools are valuable, they do not govern power.

Models do not hold authority. Decisions do.

Therefore, effective governance begins by mapping decision rights. This process identifies how AI systems influence authority structures within an organization.

When evaluating governance, consider the following questions:

- What decision does this system influence?

- Was this previously a human-controlled decision?

- Has authority quietly shifted?

- Can that authority be reversed?

If AI outputs materially change who gets approved, hired, flagged, prioritized, funded, or published, governance must anchor at that decision point.

In other words, governance must focus on authority transitions — not just technical performance.

If organizations cannot trace how model outputs alter authority, they are not governing. They are simply observing.
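One way to make the questions above concrete is to record them as fields in a small decision-rights register. The Python sketch below is only an illustration under assumed names: the `DecisionRight` class, its fields, and the three `ai_role` values are hypothetical, not an established schema.

```python
from dataclasses import dataclass


@dataclass
class DecisionRight:
    """One decision an AI system touches (all field names are hypothetical)."""
    decision: str
    previously_human: bool   # was this a human-controlled decision before?
    ai_role: str             # assumed values: "none", "advisory", "autonomous"
    human_can_reverse: bool  # can a person still undo the outcome?


def authority_shifted(d: DecisionRight) -> bool:
    """True when a formerly human decision is now influenced by the system."""
    return d.previously_human and d.ai_role != "none"


def irreversible_shifts(register: list[DecisionRight]) -> list[str]:
    """Decisions where authority has shifted and no human can reverse it."""
    return [d.decision for d in register
            if authority_shifted(d) and not d.human_can_reverse]


register = [
    DecisionRight("resume screening", True, "autonomous", False),
    DecisionRight("loan approval", True, "advisory", True),
    DecisionRight("spam filtering", False, "autonomous", True),
]
print(irreversible_shifts(register))
```

In this toy register only resume screening is flagged, because it was once a human decision, is now made autonomously, and cannot be reversed by a person.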

Identify Execution Bottlenecks

Governance rarely fails at the ethical level. Instead, it fails at operational bottlenecks.

Every AI system contains control points that determine how authority flows. These operational chokepoints represent the true levers of governance.

Common execution bottlenecks include:

- Deployment approval authority

- API access control

- Compute allocation

- Model retraining permissions

- Production update authority

- Override power

These control points determine who actually governs AI systems.

Therefore, organizations should treat these bottlenecks as governance levers. For each chokepoint, governance should require:

- Named ownership

- Logged activity

- Escalation triggers

- Audit visibility

If deployment authority sits with the same team measured on speed, governance becomes compromised. In that scenario, organizations are not governing AI; they are accelerating it.
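As a rough illustration, the four requirements above could be checked mechanically against a chokepoint registry. The Python sketch below is a minimal example, not a prescribed implementation: the `Chokepoint` class, its field names, and the `speed_measured_teams` set are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Chokepoint:
    """One control point, e.g. deployment approval (field names are hypothetical)."""
    name: str
    owner: str = ""                  # a named person, not a team alias
    owner_team: str = ""
    activity_logged: bool = False
    escalation_triggers: list[str] = field(default_factory=list)
    visible_to_audit: bool = False


def governance_gaps(cp: Chokepoint, speed_measured_teams: set[str]) -> list[str]:
    """Return the requirements this chokepoint fails to meet."""
    gaps = []
    if not cp.owner:
        gaps.append("no named owner")
    if not cp.activity_logged:
        gaps.append("activity not logged")
    if not cp.escalation_triggers:
        gaps.append("no escalation triggers")
    if not cp.visible_to_audit:
        gaps.append("not visible to audit")
    # Conflict check: the owning team is also rewarded for shipping fast.
    if cp.owner_team in speed_measured_teams:
        gaps.append("owner team is measured on speed")
    return gaps


deploy = Chokepoint(
    name="deployment approval",
    owner="J. Doe",
    owner_team="product",
    activity_logged=True,
    escalation_triggers=["failed safety review"],
    visible_to_audit=True,
)
print(governance_gaps(deploy, speed_measured_teams={"product"}))
```

Here the chokepoint satisfies all four requirements on paper, yet the check still reports a gap because its owner belongs to a team measured on speed.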

Map Incentive Conflicts Before They Break Governance

Governance structures often collapse under incentive pressure.

Different teams operate under different priorities:

- Product teams prioritize speed

- Safety teams prioritize caution

- Finance teams prioritize scale

- Executives prioritize competitive advantage

If governance frameworks fail to account for these conflicting incentives, governance becomes symbolic.

To address this challenge, organizations should implement three structural safeguards:

Conflict Mapping

Explicitly document where safety and revenue goals conflict. Identifying these pressure points early prevents governance breakdowns.

Escalation Authority

Define who has the authority to block deployment when risks emerge. Governance without blocking power lacks effectiveness.

Metric Integration

Tie safety performance to executive KPIs. When safety affects performance metrics, governance becomes operational.

Without incentive integration, governance becomes decoration rather than control.

Replace Static Policies with Capability Triggers

AI systems evolve faster than governance documents. Static policies quickly become outdated.

Instead of rigid documentation, organizations should implement capability-based governance triggers.

For example, when a system transitions from advisory output to autonomous execution, governance requirements should automatically escalate.

Capability triggers may include:

- Financial transaction authority

- Real-time publishing without human review

- API control over external systems

- Cross-dataset fusion

- Self-improving loops

When system capability crosses a threshold, oversight should increase accordingly.

Governance must scale with system power — not annual policy updates.
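A capability trigger can be sketched as a lookup from capabilities to a minimum oversight tier, with escalation whenever a change moves the system into a stricter tier. The Python example below is an assumption-laden sketch: the tier names, the capability keys, and the tier assigned to each capability are all hypothetical and would need to be set by each organization.

```python
from enum import IntEnum


class Oversight(IntEnum):
    """Oversight tiers, ordered from lightest to strictest (hypothetical)."""
    STANDARD = 1
    ENHANCED = 2
    BOARD_REVIEW = 3


# Hypothetical mapping from a capability to the minimum oversight it requires.
CAPABILITY_TRIGGERS = {
    "financial_transaction_authority": Oversight.BOARD_REVIEW,
    "realtime_publishing_without_review": Oversight.ENHANCED,
    "api_control_over_external_systems": Oversight.ENHANCED,
    "cross_dataset_fusion": Oversight.ENHANCED,
    "self_improving_loop": Oversight.BOARD_REVIEW,
}


def required_oversight(capabilities: set[str]) -> Oversight:
    """Oversight is set by the strictest tier among the capabilities held."""
    tiers = [CAPABILITY_TRIGGERS[c] for c in capabilities if c in CAPABILITY_TRIGGERS]
    return max(tiers, default=Oversight.STANDARD)


def needs_escalation(before: set[str], after: set[str]) -> bool:
    """True when a capability change pushes the system into a stricter tier."""
    return required_oversight(after) > required_oversight(before)


advisory: set[str] = set()
autonomous = {"realtime_publishing_without_review"}
print(required_oversight(autonomous).name)
print(needs_escalation(advisory, autonomous))
```

The design choice worth noting is that the tier follows from what the system can do, not from when the policy document was last reviewed.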

Govern Data Flow, Not Just Data Privacy

Most governance conversations focus primarily on data privacy. However, AI risk often emerges from data movement rather than storage.

Effective governance therefore requires visibility into data flow.

Organizations should examine:

- How outputs feed back into training pipelines

- Whether synthetic data contaminates future models

- How departments combine datasets

- Whether outputs are reused in unintended contexts

Data lineage becomes a governance mechanism.

Without visibility into how information flows through AI ecosystems, organizations amplify risk without awareness.
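To illustrate how data lineage can act as a governance mechanism, the sketch below models data flows as a small directed graph and checks whether a model's outputs eventually flow back into its own training data. The node names and the `LINEAGE` dictionary are invented for this example; a real lineage graph would come from whatever pipeline metadata an organization already records.

```python
# Hypothetical lineage graph: each key flows into the nodes in its list.
LINEAGE = {
    "crm_records": ["training_set"],
    "training_set": ["scoring_model"],
    "scoring_model": ["score_outputs"],
    "score_outputs": ["marketing_dashboard", "crm_records"],  # outputs written back
    "marketing_dashboard": [],
}


def downstream(graph: dict[str, list[str]], start: str) -> set[str]:
    """Return every node reachable from `start` by following data flows."""
    seen: set[str] = set()
    stack = list(graph.get(start, []))
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(graph.get(node, []))
    return seen


def has_feedback_loop(graph: dict[str, list[str]], node: str) -> bool:
    """A node is in a feedback loop if its own data eventually flows back into it."""
    return node in downstream(graph, node)


print(has_feedback_loop(LINEAGE, "scoring_model"))
print(has_feedback_loop(LINEAGE, "marketing_dashboard"))
```

In this toy graph the scoring model is flagged because its outputs are written back into the records it is trained on, while the dashboard, which only consumes data, is not.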

Install a Shadow Audit Layer

Traditional internal audits are predictable. Predictable audits often produce optimized behavior rather than genuine compliance.

To counter this limitation, organizations should implement shadow auditing.

Shadow auditing may include:

- Independent red teams

- Randomized stress testing

- Scenario simulation

- External technical reviewers

The purpose is not punitive. Instead, shadow auditing reveals how systems and teams behave when they are not expecting review.

If systems behave safely only under scheduled review, they are not genuinely safe.

Design Liability Architecture Early

AI systems blur accountability boundaries. When AI systems influence decisions, responsibility becomes unclear.

Organizations must define liability architecture before scaling AI deployments.

This includes:

- Ownership registries

- Action logs tied to human oversight

- Legal review triggers

- Accountability chains

Ambiguity creates diffusion of responsibility. Clear liability architecture strengthens governance effectiveness.

Monitor Compute as a Governance Signal

Compute is rarely treated as a governance variable. However, compute trends often signal capability expansion.

Sharp increases in training intensity, inference volume, or fine‑tuning cycles frequently precede system capability shifts.

Governance dashboards should therefore include:

- Compute scaling trends

- Threshold alerts

- Review triggers tied to performance leaps

Monitoring compute provides early warning signals for governance adjustments.
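A threshold alert of this kind can be as simple as comparing each period's compute usage with the previous one. The Python sketch below assumes monthly GPU-hour totals and a twofold growth threshold; both the unit and the threshold are illustrative assumptions, not recommended values.

```python
def compute_alerts(monthly_gpu_hours: list[float], growth_threshold: float = 2.0) -> list[int]:
    """Return indices of months whose usage is at least `growth_threshold`
    times the previous month's usage."""
    alerts = []
    for i in range(1, len(monthly_gpu_hours)):
        previous, current = monthly_gpu_hours[i - 1], monthly_gpu_hours[i]
        # Skip months with no prior usage to avoid dividing by zero.
        if previous > 0 and current / previous >= growth_threshold:
            alerts.append(i)
    return alerts


usage = [100.0, 110.0, 120.0, 300.0, 310.0]
print(compute_alerts(usage))
```

With these sample figures only the jump from 120 to 300 GPU-hours crosses the threshold, so a review would be triggered for that month alone.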

Make Human Override Structurally Real

Human‑in‑the‑loop mechanisms often exist only in documentation. Effective override systems require structural independence.

True override mechanisms include:

- Immediate kill switches

- Independent authority holders

- Protected escalation channels

- Logged override decisions

If the deploying team controls override authority, governance becomes compromised.

Oversight must remain structurally independent.
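The independence requirement can be enforced in code rather than left to documentation. The sketch below is a minimal Python illustration in which override holders may not overlap with the deploying team and every override is logged; the class names, the `halted` flag standing in for a real kill switch, and the example people are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class OverrideRecord:
    system: str
    decided_by: str
    reason: str
    timestamp: str


class OverrideControl:
    """Halts a system only on request from someone outside the deploying team."""

    def __init__(self, system: str, deploying_team: set[str], override_holders: set[str]):
        overlap = deploying_team & override_holders
        if overlap:
            raise ValueError(f"override holders not independent: {sorted(overlap)}")
        self.system = system
        self.override_holders = override_holders
        self.halted = False
        self.log: list[OverrideRecord] = []

    def halt(self, person: str, reason: str) -> OverrideRecord:
        if person not in self.override_holders:
            raise PermissionError(f"{person} holds no override authority")
        self.halted = True  # stand-in for a real kill switch
        record = OverrideRecord(self.system, person, reason,
                                datetime.now(timezone.utc).isoformat())
        self.log.append(record)  # every override decision is logged
        return record


control = OverrideControl("loan_scoring", {"alice", "bob"}, {"carol"})
control.halt("carol", "spike in rejected applications")
print(control.halted, len(control.log))
```

Constructing the control with a deploying-team member as an override holder fails immediately, which is the structural point: the conflict is rejected at setup rather than discovered during an incident.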

Design for Governance Drift

Governance frameworks degrade over time. Teams adopt shortcuts, new tools bypass controls, and informal processes emerge.

To prevent governance erosion, organizations should implement drift detection:

- Random compliance sampling

- Tool inventory audits

- Anonymous reporting channels

- Quarterly revalidation of authority structures

Governance must be maintained like infrastructure — continuously and proactively.
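Two of the drift checks above, random compliance sampling and tool inventory audits, lend themselves to a short sketch. The Python below is illustrative only: the function names, the 5% sampling rate, and the decision and tool identifiers are assumptions made for the example.

```python
import random
from typing import Optional


def sample_for_review(decision_ids: list[str], rate: float,
                      seed: Optional[int] = None) -> list[str]:
    """Pick a random subset of logged decisions for manual compliance review."""
    if not 0 < rate <= 1:
        raise ValueError("rate must be in (0, 1]")
    rng = random.Random(seed)
    # Review at least one decision whenever any exist.
    k = max(1, round(len(decision_ids) * rate)) if decision_ids else 0
    return rng.sample(decision_ids, k)


def unapproved_tools(approved: set[str], observed: set[str]) -> set[str]:
    """Tools seen in use that are missing from the approved inventory."""
    return observed - approved


ids = [f"decision-{i}" for i in range(200)]
picked = sample_for_review(ids, rate=0.05, seed=7)
print(len(picked))
print(unapproved_tools({"vendor_llm"}, {"vendor_llm", "browser_plugin"}))
```

Because the sample is random rather than scheduled, teams cannot prepare specific decisions for review, which is what makes the check useful for detecting drift.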

Treat AI as Infrastructure, not a Feature

This mindset shift fundamentally changes governance strategy.

If AI influences core decision systems, it becomes infrastructure rather than a feature.

Infrastructure governance requires:

- Executive-level oversight

- Budget-linked accountability

- Clear authority boundaries

- Structural audit mechanisms

- Escalation architecture embedded in operations

Once intelligence scales, it becomes power. Power requires structured governance.

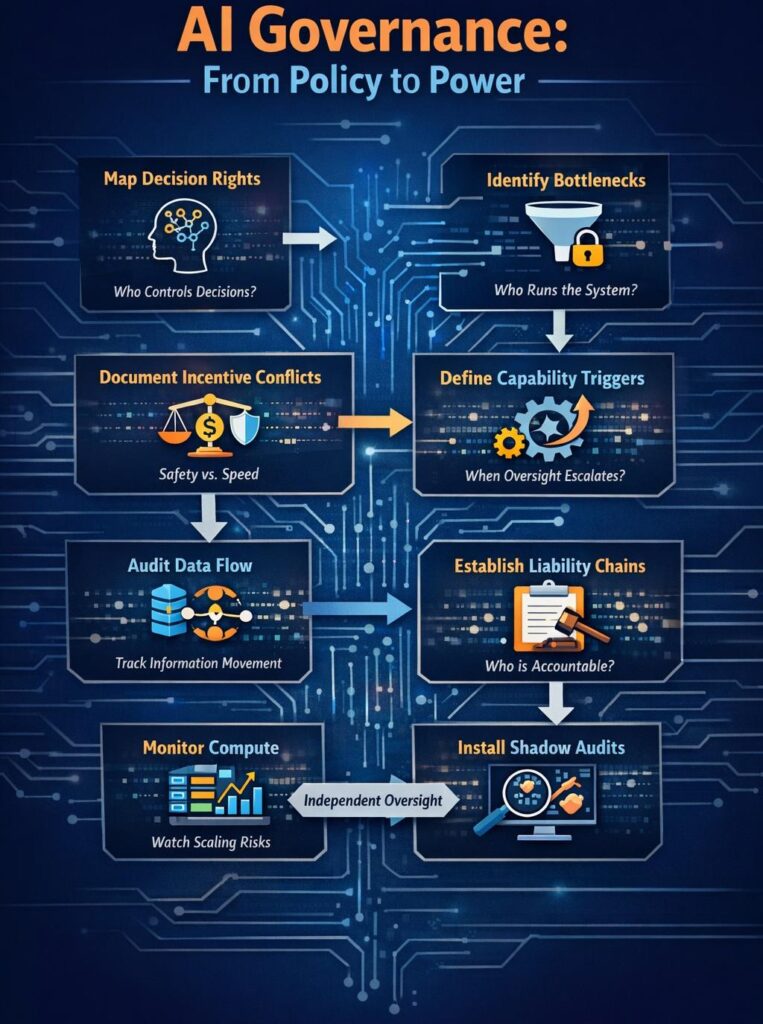

Implementation Sequence for Operational Governance

When implementing operational governance, the following sequence provides a practical framework:

- Map decision rights

- Identify execution bottlenecks

- Document incentive conflicts

- Define capability thresholds

- Audit data flow

- Establish liability architecture

- Integrate compute monitoring

- Assign independent override authority

- Deploy shadow auditing

- Install drift detection systems

This sequence transforms governance from theoretical alignment into operational architecture.

Final Perspective: Governance Is Power Allocation

Most organizations ask:

“How do we use AI responsibly?”

However, a more important question emerges:

“How do we control scalable decision systems once they are embedded into operational infrastructure?”

AI governance is not a compliance exercise. Instead, it is a power allocation framework.

It determines:

- Who deploys intelligence

- Who scales intelligence

- Who overrides intelligence

- Who absorbs consequences

If governance is not implemented at the execution layer, execution will quietly become governance.

By the time organizations notice, authority will already have shifted.

What is AI governance in simple terms?

AI governance refers to the systems, processes, and structures used to control how artificial intelligence is developed, deployed, and used within an organization. Instead of focusing only on policies, effective governance ensures that AI-driven decisions remain accountable, transparent, and aligned with organizational goals.

Why is AI governance becoming important now?

AI systems are increasingly influencing decisions across hiring, lending, operations, forecasting, and automation. As AI gains decision-making influence, organizations must ensure that authority, responsibility, and oversight remain clearly defined and controlled.

Who should be responsible for AI governance in an organization?

Responsibility should be shared across multiple stakeholders, including:

- Executive leadership

- Risk and compliance teams

- Technology and engineering teams

- Legal and policy teams

- Business unit leaders

This shared responsibility ensures balanced oversight and reduces the risk of authority concentration.

What are decision rights in AI governance?

Decision rights refer to identifying which decisions AI systems influence and who ultimately controls those decisions. Mapping decision rights helps organizations understand where authority has shifted and whether human oversight remains effective.

Rajashri’s work stands out because she approaches AI governance with real structural seriousness.

A lot of the field still treats governance as a mix of policy language, high-level principles, and public positioning. Rajashri does something much more valuable: she looks underneath the language and asks how accountability is actually structured, where authority actually sits, how risk moves through a system, and whether the claimed controls are real in practice.

That is a rare skill.

She has a strong way of seeing the architecture beneath the narrative — the decision rights, the operational bottlenecks, the override points, the incentive conflicts, the accountability gaps. That kind of analysis is what separates governance as vocabulary from governance as a real systems discipline.

What I appreciate most is that she brings rigor without superficiality. She is not just talking about governance. She is examining whether the system can actually support the governance being claimed. That makes her work unusually clear, credible, and needed in this space.